With the emergence of ML and AI, more and more services use ML models in their apps, so a way to integrate those models with fewer lines of code is very useful. PyCaret offers exactly that, and in this article we will learn how to use its create_api() function to serve an ML model as a web API.

What is PyCaret?

PyCaret is an open-source, low-code machine learning library for Python: an entire machine-learning pipeline can be built with just a few lines of code.

We can use this facility to spend less time coding and more time analyzing our data.

It lowers the barrier to entry for machine learning by reducing the amount of knowledge needed to apply it.

PyCaret even automates steps such as feature engineering, imputation of missing values, and hyperparameter tuning.

pip install pycaret[full]

The above command installs PyCaret along with all of its optional dependencies.

What are APIs?

APIs let us integrate the functionality of another product or service into our own without knowing its internal details or building that functionality from scratch, which saves a lot of time and money.

Parameters of PyCaret create_api()

create_api(estimator, api_name: str, host: str = '127.0.0.1', port: int = 8000)

estimator is the trained model object, for example the one returned by create_model(), that the API will use to make predictions on incoming data.

api_name is a string, so we can give our API any name we like. PyCaret also uses it to name the files it generates; for example, 'lr_api' produces the script lr_api.py and the saved model lr_api.pkl.

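host is the IP address, passed as a string, on which the API server listens. The default '127.0.0.1' makes the API reachable only from the same machine, while '0.0.0.0' makes it listen on all network interfaces so that other machines can reach it.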

port is an integer specifying the port on which the API will be accessible, for example 9001. The default is 8000.
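The meaning of the host and port values can be checked with a few lines of standard-library code. The sketch below is not part of PyCaret (describe_bind_address is a hypothetical helper): it uses the ipaddress module to tell the loopback address, the unspecified address 0.0.0.0, and a specific interface apart, and checks that the port is in the valid TCP range 1 to 65535. It accepts IP literals only, so a hostname such as 'localhost' raises ValueError.

```python
import ipaddress


def describe_bind_address(host: str, port: int) -> str:
    """Explain what binding an API server to (host, port) means.

    Hypothetical helper for illustration; it is not part of PyCaret.
    """
    # TCP ports are 16-bit numbers; port 0 would mean "any free port",
    # which clients could not know in advance, so it is rejected here.
    if not isinstance(port, int) or not 1 <= port <= 65535:
        raise ValueError(f"port must be an integer in 1..65535, got {port!r}")

    addr = ipaddress.ip_address(host)  # raises ValueError for hostnames
    if addr.is_loopback:
        scope = "loopback: reachable from this machine only"
    elif addr.is_unspecified:
        scope = "all interfaces: may be reachable from other machines"
    else:
        scope = f"only via the interface with address {addr}"
    return f"{host}:{port} ({scope})"


if __name__ == "__main__":
    print(describe_bind_address("127.0.0.1", 8000))  # the create_api() defaults
    print(describe_bind_address("0.0.0.0", 9001))
```

Whether 0.0.0.0 is actually reachable from other machines still depends on the firewall and network setup, which is why the message only says "may be".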

How to use PyCaret create_api()

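We can use create_api() in PyCaret to create a machine-learning web API that other applications can use. The example below uses the diamond dataset; since its target, Price, is a continuous value, we import the regression module.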

from pycaret.datasets import get_data

diamond_data = get_data('diamond')

from pycaret.regression import *

exp = setup(data=diamond_data, target='Price')

model = create_model('lr')

create_api(model, 'lr_api')

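create_api() only generates the API: it writes the script lr_api.py and saves the trained pipeline as lr_api.pkl, but it does not start a server. To run the created API from a Jupyter notebook, use the following command (the leading ! tells the notebook to run it as a shell command; in an Ubuntu, macOS, or Windows terminal, omit the !):

!python lr_api.py

The generated script needs fastapi and uvicorn. If they are not already installed, install them with:

pip install fastapi

pip install uvicorn

Alternatively, after running the PyCaret code above to generate lr_api.py, open a terminal or command prompt, change into the folder that contains lr_api.py (pycaret-fastapi in this example), and start the API with uvicorn:

cd pycaret-fastapi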

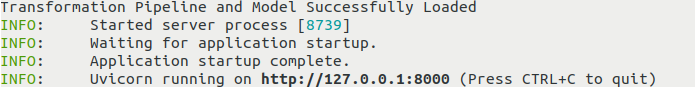

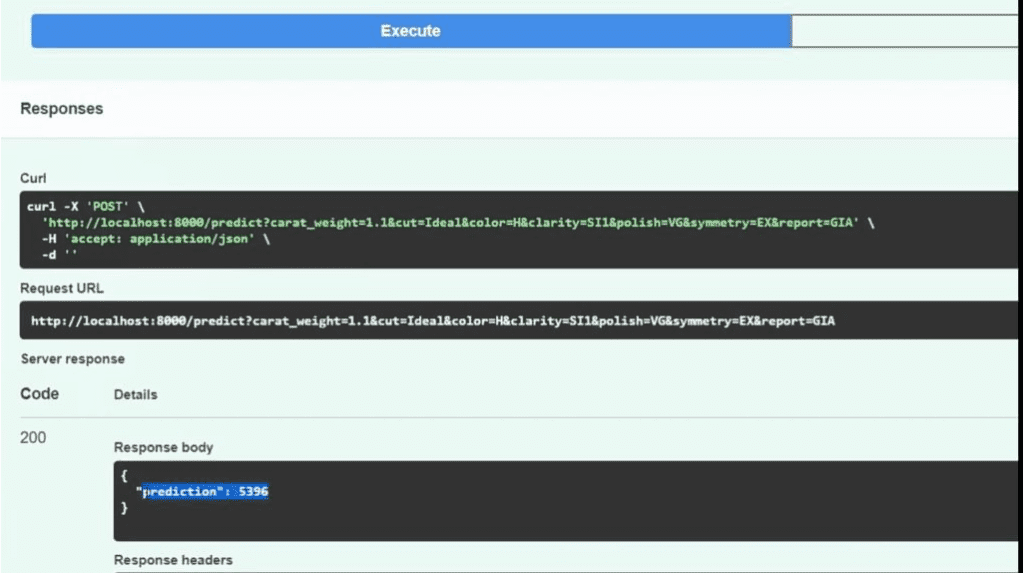

uvicorn lr_api:app --reload

After that, the API goes live on the local host (uvicorn listens on 127.0.0.1:8000 by default, and --reload restarts the server automatically whenever lr_api.py changes). We can then use it to predict the target variable for new data points, as shown below:

Opening http://127.0.0.1:8000 in the browser first shows the following:

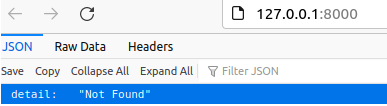

To see a form-style view of the API, open http://127.0.0.1:8000/docs, the interactive documentation page that FastAPI generates automatically:

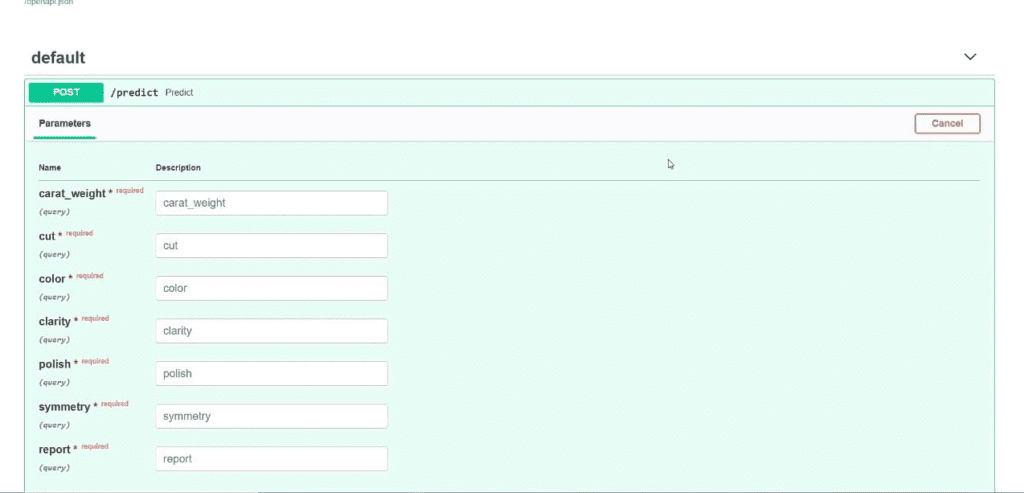

After clicking Try it out, we can enter the values for our ML model.

After filling in the values and clicking the Execute button, we get the model's response.

We can now use the requests library in Python to integrate this ML web functionality into our app: sending a request to the Request URL shown on the docs page returns the model's prediction, which we can then use in various ways in our application.
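With the requests library, the call is essentially requests.post(url, json=row).json(). To keep the sketch below free of third-party dependencies, it sends the same JSON POST request with the standard library's urllib.request. It assumes lr_api is running locally and, as in recent PyCaret versions, exposes a POST /predict endpoint that takes the feature columns as a JSON body; the helper names are hypothetical, and if the /docs page lists the inputs as query parameters instead, the values must go in the URL.

```python
import json
import urllib.request

# Assumption: lr_api is running locally (e.g. `uvicorn lr_api:app`) and exposes
# POST /predict with the feature columns as a JSON body. Check /docs first.
API_URL = "http://127.0.0.1:8000/predict"


def build_request(row: dict, url: str = API_URL) -> urllib.request.Request:
    """Wrap one data point (feature name -> value) in a JSON POST request."""
    return urllib.request.Request(
        url,
        data=json.dumps(row).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def predict(row: dict, url: str = API_URL, timeout: float = 10.0) -> dict:
    """Send one data point to the API and return its decoded JSON response."""
    with urllib.request.urlopen(build_request(row, url), timeout=timeout) as resp:
        return json.load(resp)


# Usage with a hypothetical diamond record; the keys must match the input
# fields listed on the /docs page:
#   predict({"Carat Weight": 1.1, "Cut": "Ideal", "Color": "H", "Clarity": "SI1",
#            "Polish": "VG", "Symmetry": "EX", "Report": "GIA"})
```

Separating build_request() from predict() keeps the payload construction testable without a running server.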

What makes PyCaret special?

We can use PyCaret's various functionalities to automate the machine learning pipeline with just a few lines of code.

Using PyCaret: Example 1

# loading dataset using get_data()

from pycaret.datasets import get_data

diabetes = get_data('diabetes')

# Setting up our data for further application of ML algorithms

from pycaret.classification import *

exp = setup(data=diabetes, target='Class variable')

# creating a model

logistic_regression = create_model('lr')

We retrieve the data using get_data(), which takes the dataset's name as its argument and returns the data as a pandas DataFrame.

Next, we import everything from pycaret.classification and call setup(), which prepares the data for modeling in a single line of code: it infers the column types, imputes missing values, encodes categorical features, and splits the data into training and test sets.

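create_model() trains the given estimator, in this case 'lr' (logistic regression), and evaluates it with k-fold cross-validation on the training data (10 folds by default), displaying the score for each fold along with the mean and standard deviation.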
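The evaluation in create_model() is k-fold cross-validation: the training rows are split into k folds, the model is fitted k times on k-1 folds, and each fit is scored on the fold it did not see. The stdlib sketch below shows only how row indices are partitioned; kfold_indices is a hypothetical helper implementing plain, unshuffled k-fold, whereas PyCaret uses stratified k-fold by default for classification so that each fold keeps the class proportions.

```python
from collections.abc import Iterator


def kfold_indices(n_rows: int, k: int = 10) -> Iterator[tuple[list[int], list[int]]]:
    """Yield (train_idx, test_idx) pairs for plain, unshuffled k-fold CV.

    Hypothetical helper for illustration; PyCaret delegates this to scikit-learn.
    """
    if not 2 <= k <= n_rows:
        raise ValueError("k must be between 2 and the number of rows")
    # Spread the remainder over the first folds so fold sizes differ by at most 1.
    base, extra = divmod(n_rows, k)
    start = 0
    for fold in range(k):
        size = base + (1 if fold < extra else 0)
        test_idx = list(range(start, start + size))
        train_idx = list(range(0, start)) + list(range(start + size, n_rows))
        yield train_idx, test_idx
        start += size
```

Each row lands in exactly one test fold, so averaging the k held-out scores (and taking their standard deviation) gives the summary rows of the score grid that create_model() prints.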

Using PyCaret: Example 2

from pycaret.datasets import get_data

from pycaret.classification import *

diabetes = get_data('diabetes')

exp = setup(data=diabetes, target='Class variable')

# Applying multiple models

best = compare_models()

The above example shows how multiple machine learning algorithms can be applied in a single line of code with compare_models(), which would otherwise take tens of lines of code. It trains and cross-validates the estimators in PyCaret's model library, displays a grid of their cross-validation scores, and returns the best-performing model (ranked by Accuracy by default for classification).
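Conceptually, the ranking step of compare_models() averages each model's fold scores, sorts the models by one metric (Accuracy by default for classification, configurable through its sort parameter), and returns the top model. The stdlib sketch below reproduces only that ranking step, with hypothetical helper names; it trains nothing, and the per-fold scores it takes would come from cross-validating each model.

```python
from statistics import mean, stdev


def rank_models(fold_scores: dict[str, list[float]]) -> list[tuple[str, float, float]]:
    """Turn {model name: per-fold scores} into (name, mean, std) rows,
    sorted best-first by mean score (higher is better, as with Accuracy).

    Each model needs at least two fold scores for the standard deviation.
    """
    rows = [(name, mean(scores), stdev(scores)) for name, scores in fold_scores.items()]
    return sorted(rows, key=lambda row: row[1], reverse=True)


def best_model(fold_scores: dict[str, list[float]]) -> str:
    """Name of the top-ranked model (compare_models() returns the fitted model itself)."""
    return rank_models(fold_scores)[0][0]
```

For a metric where lower is better, such as an error metric in regression, the sort order would have to be reversed.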

FAQs

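Our api_name does not have to match the name of the Python script that calls create_api(); we simply pass the name of our API to create_api() as a string.

For example, our training script can be "ML_model.py" while our api_name is "pycaret_ml_model". Note, however, that create_api() uses api_name to name the files it generates, so in this example the API script is pycaret_ml_model.py, and it is started with uvicorn pycaret_ml_model:app.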
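Because the generated files are named after api_name, a quick way to confirm that create_api() ran as expected is to check that both exist. A minimal pathlib sketch; expected_files and missing_files are hypothetical helpers, and the naming (a .py script plus a .pkl model, both named after api_name) is an assumption based on how create_api() and save_model() name their output, so adjust it if your PyCaret version differs.

```python
from pathlib import Path
from typing import Union


def expected_files(api_name: str, folder: Union[str, Path] = ".") -> dict[str, Path]:
    """Paths that create_api(model, api_name) is assumed to write."""
    folder = Path(folder)
    return {
        "script": folder / f"{api_name}.py",  # FastAPI app, run with uvicorn
        "model": folder / f"{api_name}.pkl",  # pipeline saved via save_model()
    }


def missing_files(api_name: str, folder: Union[str, Path] = ".") -> list[str]:
    """Names of expected files that do not exist yet (empty list = all present)."""
    return [p.name for p in expected_files(api_name, folder).values() if not p.exists()]
```

For instance, missing_files("pycaret_ml_model") returns an empty list once create_api(model, 'pycaret_ml_model') has run in the current folder.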

We can change the host by passing a string such as '127.0.0.1' or '0.0.0.0' as the host parameter; it does not have to be '127.0.0.1'.
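Assuming, as in recent PyCaret versions, that the generated script passes host and port to uvicorn.run() only inside its if __name__ == "__main__": block, those values take effect with python lr_api.py but not when uvicorn loads lr_api:app directly, because the module's __name__ is then 'lr_api'. A minimal sketch that builds and runs the matching uvicorn command with its --host and --port options; the helper names are hypothetical, and it assumes uvicorn is installed and the generated script is in the current folder.

```python
import subprocess
import sys


def uvicorn_command(api_name: str, host: str = "127.0.0.1", port: int = 8000,
                    reload: bool = True) -> list[str]:
    """Build the uvicorn command line for the script generated by create_api().

    Hypothetical helper; "<api_name>:app" assumes the generated module names
    its FastAPI instance `app`, as lr_api.py does.
    """
    cmd = [sys.executable, "-m", "uvicorn", f"{api_name}:app",
           "--host", host, "--port", str(port)]
    if reload:
        cmd.append("--reload")  # restart automatically when the script changes
    return cmd


def run_api(api_name: str, host: str = "127.0.0.1", port: int = 8000,
            reload: bool = True) -> None:
    """Start the API; blocks until the server is stopped (Ctrl+C)."""
    subprocess.run(uvicorn_command(api_name, host, port, reload), check=True)


# Usage, roughly equivalent to `uvicorn lr_api:app --host 0.0.0.0 --port 9001 --reload`:
#   run_api("lr_api", host="0.0.0.0", port=9001)
```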

We can change the port by passing an integer as the port parameter of create_api(). Any valid port number (1 to 65535) can be used; the only requirement is that the port must be free, otherwise the server fails with an "address already in use" binding error.
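To avoid the binding error, we can check whether a port is free before passing it to create_api() or uvicorn. A minimal sketch using the standard socket module; is_port_free is a hypothetical helper, it handles IPv4 hosts only, and it can only report the situation at the moment of the check, since another program may take the port before the API starts.

```python
import socket


def is_port_free(port: int, host: str = "127.0.0.1") -> bool:
    """Return True if a TCP server could bind to (host, port) right now."""
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as sock:
        try:
            sock.bind((host, port))
        except OSError:  # e.g. "Address already in use" (EADDRINUSE)
            return False
    return True
```

For example, is_port_free(8000) returning False means another process (possibly an earlier run of the API) is already using the default port, so a different port such as 9001 should be chosen.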

create_api() returns None: it only writes the API script and saves the model to disk, and the API itself is then started from the command line.

We can use it whenever we are short on time or need to reduce development time and spend more time on data analysis.
